CVPR 2026 – Paper (LUMINA Breast Cancer)

What if the biggest problem in breast imaging AI is not the model—but the machine that took the image?

That is the bold question behind LUMINA, a new paper by Dr. Ulas Bagci and colleagues, recently published at CVPR 2026, one of the world’s premier venues in artificial intelligence and computer vision.

In medical AI, everyone wants smarter models. Bigger models. Faster models. More “intelligent” models.

But Dr. Bagci’s team turned attention to something more fundamental: what happens when the same disease looks different simply because it was captured by different mammography systems?

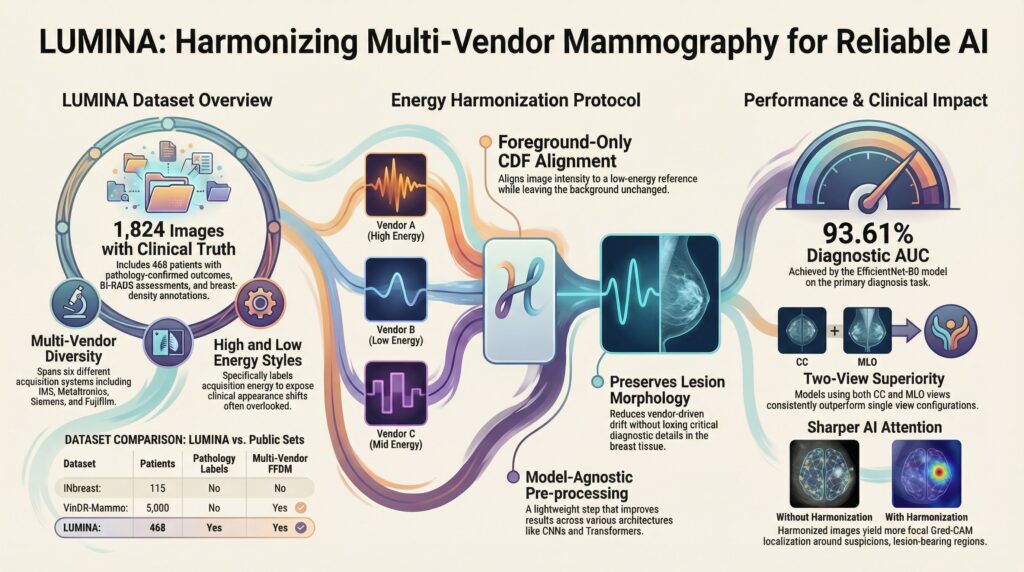

This is where the story begins. The team introduced LUMINA, a new multi-vendor full-field digital mammography benchmark built to reflect a major real-world challenge in breast imaging: domain shift across scanners, vendors, and acquisition energy profiles. The dataset includes 1,824 mammograms from 468 patients, with pathology-confirmed benign and malignant labels, as well as BI-RADS assessments and breast density annotations. It spans six acquisition systems, making it a rare benchmark designed not for an ideal laboratory setting, but for the messy reality of clinical deployment.

But the paper does more than introduce a dataset. It also proposes a deceptively simple idea with powerful consequences: harmonize the image before asking AI to interpret it. Rather than relying on heavy model-specific adaptation, the authors developed a foreground-only pixel-space energy harmonization protocol that aligns mammograms across vendors while preserving lesion structure. In plain language: it helps AI stop getting distracted by how the image was acquired, so it can focus on what actually matters—the tissue, the lesion, the patient. The paper shows that this harmonization step consistently improves model performance and even leads to more focused Grad-CAM attention maps, suggesting not only better accuracy, but better localization of suspicious regions.
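To make the idea concrete, here is a minimal sketch of what "harmonize only the foreground, in pixel space" could look like. It is not the paper's protocol, whose details this post does not cover. As a stand-in it uses plain histogram (quantile) matching against a reference image, restricted to breast pixels. The function names, the fixed-fraction threshold used to find the foreground, and the synthetic images are all assumptions made for illustration.

```python
import numpy as np


def foreground_mask(image, threshold=0.05):
    # Hypothetical foreground rule: keep pixels brighter than a small
    # fraction of the image maximum (the paper may segment differently).
    return image > threshold * image.max()


def harmonize_foreground(source, reference, threshold=0.05):
    """Match the source's foreground intensities to the reference's.

    Illustrative stand-in (quantile matching), not the LUMINA method.
    Background pixels are returned unchanged.
    """
    src_mask = foreground_mask(source, threshold)
    ref_mask = foreground_mask(reference, threshold)
    src_fg, ref_fg = source[src_mask], reference[ref_mask]
    out = source.astype(np.float64, copy=True)
    if src_fg.size == 0 or ref_fg.size == 0:
        return out  # nothing to align

    # Empirical CDFs of the two foreground intensity distributions.
    _, src_inverse, src_counts = np.unique(
        src_fg, return_inverse=True, return_counts=True
    )
    src_quantiles = np.cumsum(src_counts) / src_fg.size
    ref_values, ref_counts = np.unique(ref_fg, return_counts=True)
    ref_quantiles = np.cumsum(ref_counts) / ref_fg.size

    # Send each source intensity to the reference intensity at the same
    # quantile. The mapping is monotone, so the brightness ordering of
    # foreground pixels (and with it the local structure) is preserved.
    mapped = np.interp(src_quantiles, ref_quantiles, ref_values)
    out[src_mask] = mapped[src_inverse]
    return out


# Synthetic example: two "vendors" with different foreground intensity ranges.
rng = np.random.default_rng(0)
reference = np.zeros((64, 64))
reference[:, 16:] = rng.uniform(0.4, 0.9, size=(64, 48))
source = np.zeros((64, 64))
source[:, 16:] = rng.uniform(0.2, 0.5, size=(64, 48))

harmonized = harmonize_foreground(source, reference)
```

In this toy setup the background stays at zero while the foreground intensities move into the reference range. Because the remapping never reorders pixel brightness, edges and textures inside the breast region keep their shape, which is the property the paragraph above describes as preserving lesion structure.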

The results are striking. For breast cancer diagnosis, the best two-view model reached an AUC of 93.54%. For BI-RADS classification, EfficientNet-B0 achieved AUCs above 92% in the two-class setting, while Swin-T delivered the strongest density prediction performance, reaching a macro-AUC of 89.43%. Across tasks, the message was clear: when the input becomes more consistent, the intelligence becomes more reliable.
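For readers less familiar with the metric, a macro-AUC is usually the unweighted average of per-class, one-vs-rest AUCs. The sketch below assumes that convention, since this post does not say exactly how the paper averages. The labels and scores are made-up toy values, not LUMINA results, and the three-class setup is only for brevity.

```python
def binary_auc(labels, scores):
    """AUC as the chance a positive is scored above a negative (ties count half)."""
    pos = [s for y, s in zip(labels, scores) if y == 1]
    neg = [s for y, s in zip(labels, scores) if y == 0]
    if not pos or not neg:
        raise ValueError("AUC needs at least one positive and one negative sample")
    wins = sum((p > n) + 0.5 * (p == n) for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


def macro_auc(labels, class_scores):
    """Unweighted mean of one-vs-rest AUCs, one per class."""
    n_classes = len(class_scores[0])
    aucs = []
    for c in range(n_classes):
        one_vs_rest = [1 if y == c else 0 for y in labels]
        scores_c = [row[c] for row in class_scores]
        aucs.append(binary_auc(one_vs_rest, scores_c))
    return sum(aucs) / n_classes


# Toy example: six images, three classes, one row of class scores per image.
toy_labels = [0, 0, 1, 1, 2, 2]
toy_scores = [
    [0.7, 0.2, 0.1],
    [0.4, 0.5, 0.1],
    [0.2, 0.6, 0.2],
    [0.3, 0.4, 0.3],
    [0.1, 0.3, 0.6],
    [0.2, 0.2, 0.6],
]
print(f"macro-AUC: {100 * macro_auc(toy_labels, toy_scores):.2f}%")
```

Averaging per class rather than per image means a rare class counts as much as a common one, which is typically why macro averaging is chosen for imbalanced labels.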

At its heart, LUMINA is about a shift in mindset.

The future of medical AI will not be built only by inventing larger networks. It will also be shaped by building fairer benchmarks, cleaner harmonization pipelines, and more realistic tests of generalization. In that sense, this paper is not just about mammography. It is about the next phase of healthcare AI: moving from impressive prototypes to systems that can actually survive contact with the real world.

And perhaps that is the real innovation here:

not teaching AI to see more,

but teaching it to see through variation.

Here is the paper’s preprint: https://arxiv.org/pdf/2603.14644